We will be exploring some of the issues, curriculum tools, and materials available to educators around AI concepts in a series of blog posts in the coming months.

If you have been online lately, you’ve probably noticed a rise in the number of discussions about artificial intelligence (AI). Here’s a fact: AI technologies are already pervasive in our lives and they aren’t going away. When you use a word processing tool or text messaging app and it suggests words to you as you write, you are using AI. When you use an app to know what the weather will be like in the next 10 days, you are using AI. When you want to know how long it will take to get to a given location tomorrow morning, you are using AI. When you are watching YouTube or listening to music on an app and click on a suggested video or song, you are using AI. When you go on a website and use the chat box to get answers to your questions, you are most likely using AI. If you use translation tools such as Google Translate or DeepL, you are using AI. In education settings, most text-to-speech, speech-to-text, captioning, and predictive tools use some form of AI technology.

The ChatGPT demo that generated so much attention when it was released this fall by OpenAI, and others like it, is changing the internet landscape. The search engines we know today may soon be updated to include search and chat modes that use ChatGPT and other emerging technologies. Microsoft is currently testing a version in its Bing search engine, although it is proving to be in need of some tweaking. We are beginning to see an abundance of reactions to ChatGPT and other similar technologies. Though these are murky waters, we too are wading into this current of artificial intelligence. We found this New Yorker article to provide a solid lay of the land, so we suggest you start there if you’re unfamiliar with this AI tool. Another recent and very comprehensive discussion of where this technology came from is available from MIT Technology Review. Put most simply, ChatGPT is a chatbot that answers the questions you ask it.

But ChatGPT is really only the very tip of a large iceberg. It is just one tool of thousands in a greater landscape known as ‘artificial intelligence’. From text-to-image generators like DALL-E 2, to music generators like AIVA, AI is becoming even more widespread. In education circles, we are asking ourselves how ChatGPT will disrupt our practices. But we should be asking ourselves – what are the implications of living in a world where AI is everywhere?

Pathways for using ChatGPT as a learning tool

It has been a long time since something has caused this many immediate ripples in education – and generated such a vast spectrum of reactions. Currently, on Facebook, there is a Quebec-based ChatGPT educators group with membership in the thousands – 3.8K and counting. The members of this group are busy discussing how to use ChatGPT in their classrooms: some people are excited about it and want to explore how it can benefit learning for students and educators alike. Others are more interested in how to prevent students from using ChatGPT in their assignments and are looking for ways to block it. They view it as a dangerous threat to the education system and consider its use to be cheating, and therefore dishonest.

Any technological tool has the possibility to enhance or diminish the effectiveness of the teaching/learning scenario. What makes the difference between a tool being effective or not usually comes down to the pedagogical intention of the teacher, and the stance of the learner. Just like with personal devices and social media, many students will access ChatGPT at home regardless of whether teachers choose to embrace or oppose its use in their classrooms. Similarly, the presence of chatbots in students’ lives will only increase as there are already dozens of ‘education assistants’ available online. Given this reality, it’s important to have critical conversations with your students and colleagues – about ChatGPT maybe, but about how to navigate the pervasive nature of AI, certainly.

We agree that worrying too much about whether or not students will use ChatGPT to write essays is a waste of energy. That energy would be better spent asking ourselves how we can design activities and assignments that allow students to make new connections and think critically about what they are learning. While we are only beginning to explore the use of chatbots in education, educators do not need to be experts before introducing this technology to students. ChatGPT, and the swirl of conversation around it, is an opportunity to teach process knowledge; students can learn to critically evaluate technology to nurture their digital competency. Why not harness the disruptive, and therefore engaging, power of ChatGPT and ask students to evaluate its use in their lives?

AI and the Digital Competency Framework

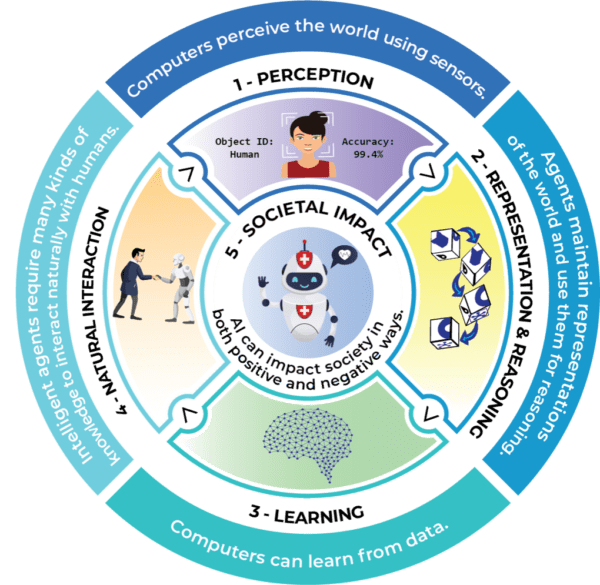

The field of AI is vast and it is difficult to know where to begin. Some groups have already begun to look at how it might be tackled with elementary and secondary students. The Artificial Intelligence Initiative for K12 has suggested Five Big Ideas in Artificial Intelligence – Perception, Representation & Reasoning, Learning, Natural Interaction and Societal Impact.

Five Big Ideas in Artificial Intelligence from AI4K12

In Quebec, the Digital Competency Framework provides some pathways to explore artificial intelligence, as does the provisional Culture and Citizenship in Quebec program.

Artificial Intelligence addresses the core dimension of ethical citizenship. Many interesting questions must be considered. Is an essay written by a chatbot cheating if I prompted it to do so? What about if the chatbot only writes my outline, which I then turn into an essay using my own words? We already struggle to understand copyright issues and digital ownership in a digital world where sharing is part of the social culture. Now we see artificial intelligence blurring these lines further. Does a text or image created by AI belong to anyone other than the individual who entered the prompt?

And there are even bigger questions to ask. At LEARN, we are much more interested in the potential of teaching students about the broader spectrum of AI and getting them to experiment with technologies that are emerging. How might AI be harnessed to help us solve the world’s critical problems in the future? What creative applications of AI are possible in the arts and media production? How will AI technologies contribute to culture?

We have to understand what emerging AI tools do well and what they don’t do well so that we can make the best use of them. In the past, the promise of technology was to free humans from drudgery, but that hasn’t been the case for everyone, as one drudgery has frequently been replaced with another. Can AI help us do tasks that are tedious so we can spend more time doing creative tasks and ones that involve more complex thinking? How can AI help us access even more complexity?

Even if students don’t become involved directly in developing AI technologies in the future, they will need to understand the issues around its ethical and responsible uses in order to be informed and active citizens.

Learners’ relationship with learning plays a large role in how they will use and approach AI tools. If learning, and not grades on assignments, is the goal, students will come up with ways of using these tools to support their learning, and not bypass it entirely. People, students included, are largely goal-driven. They will use, or are already using, AI tools that help them accomplish what they want to get done – for entertainment or educational purposes. If a student’s learning stance is to get better at something, and they are rewarded for the process in which they fully engage, then using AI to cheat or bypass learning will not be an issue for them. One of our kids, a thirteen-year-old who struggles with writing, was told about ChatGPT, and that she could use it to write texts for her. She looked horrified. “Why would I want to do that? I need to learn how to write, myself!” Repositioning the learner at the heart of their own learning will be an important part of the conversation around AI in education.

Concluding remarks

Beyond all other dimensions, AI makes critical thinking paramount in the use of digital technology. We need to decide what is a fair trade for being able to use such tools. The more we use AI, the better it becomes at emulating human habits, interactions, and everyday thinking. At what point will we tip from directing this technology to a place where the technology is directing us? We will also need to become better at identifying questionable journalism and academic research. We have already seen the effects of fake news on global health, the environment, and the political world.

The launch of the ChatGPT demo has highlighted the urgent need for information literacy and critical thinking with regard to artificial intelligence. Beyond the initial excitement and fear, we need to take a step back and gain a better understanding of the potential and pitfalls of using AI conversational tools and others for pedagogical purposes. No doubt, all kinds of players will emerge to get their share of the market – https://www.futurepedia.io lists almost 1,000 tools to date. Attempting to block one site or tool is not going to solve the “problem”.

At the start of this blog post, we asked: what are the implications of living in a world where AI is everywhere? We could also now ask: what might be the future implications of our reliance on these now ubiquitous technologies? The contemporary philosopher Yuval Noah Harari points out that the future most of us imagine now “is merely the future of the past” (Harari, p.76) because its seeds were planted by our collective consciousness years ago. All signs point to the idea that we find ourselves at a turning point in time, with advances in AI growing quickly beyond our understanding. As with any period of great change, it is education – the deliberate fostering of critical awareness, deep understanding, and creative problem-solving – that will help us navigate the new future that is being born today.

Read More

ChatGPT is everywhere. Here’s where it came from. https://www.technologyreview.com/2023/02/08/1068068/chatgpt-is-everywhere-heres-where-it-came-from/

ChatGPT Is a Blurry JPEG of the Web. https://www.newyorker.com/tech/annals-of-technology/chatgpt-is-a-blurry-jpeg-of-the-web

Microsoft limits Bing chat to five replies to stop the AI from getting real weird. https://www.theverge.com/2023/2/17/23604906/microsoft-bing-ai-chat-limits-conversations

Artificial Intelligence Initiative for K12

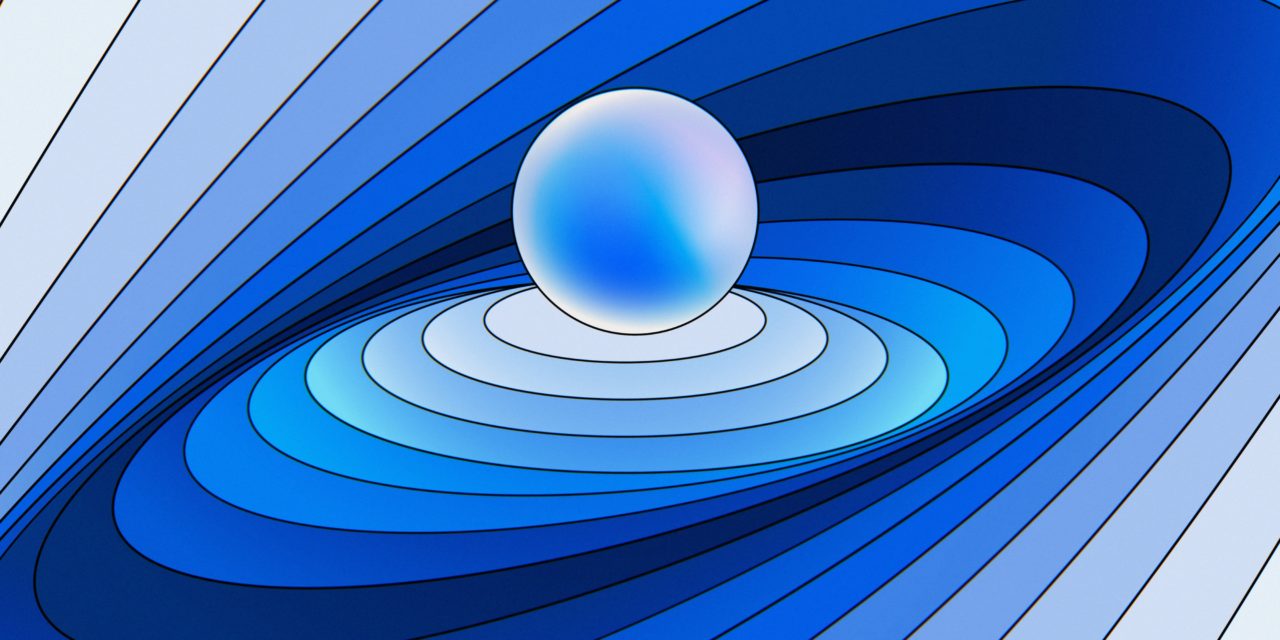

Images from DeepMind

Featured image:

“This represents how AI needs to take constant accountability. Various actions are occurring all around the centre orb which represents AI’s moral responsibilities. The orb centrepiece remains stable to make sure everything is balanced and functional.” Accountability. Artist: Champ Panupong Techawongthawon

Other abstract images:

Deep Learning: Design focused on pattern recognition. Artist: Vincent Schwenk

Large Language Models. Artist: Tim West